Why data may become the key infrastructure for robotics

Recent progress in large AI models has revealed an important pattern: scaling laws.

In language models, performance improves predictably, roughly as a power law, as model size, compute, and training data increase. This observation transformed the AI landscape, shifting focus from algorithmic innovation toward data and infrastructure at scale.
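To make "improves predictably" concrete, here is a minimal sketch that assumes loss follows a power law in dataset size, loss ≈ a · size^(−alpha). The data points are synthetic and generated from made-up constants purely for illustration; they are not measurements from any real model, and `fit_power_law` is a hypothetical helper name.

```python
import math

def fit_power_law(sizes, losses):
    """Fit loss ~ a * size**(-alpha) by least squares in log-log space."""
    xs = [math.log(s) for s in sizes]
    ys = [math.log(l) for l in losses]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Ordinary least-squares slope and intercept of log(loss) vs log(size).
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope

# Synthetic points generated from assumed constants a = 5.0, alpha = 0.1.
sizes = [1e6, 1e7, 1e8, 1e9]
losses = [5.0 * s ** -0.1 for s in sizes]

a, alpha = fit_power_law(sizes, losses)
# Extrapolate the fitted curve to a dataset ten times larger than any observed.
predicted = a * 1e10 ** (-alpha)
print(f"alpha = {alpha:.3f}, extrapolated loss at 1e10 examples = {predicted:.3f}")
```

A straight line in log-log space is what makes such a trend useful for planning: a fit on small runs gives a rough estimate of what a larger run might achieve, provided the trend continues to hold.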

A similar dynamic may now be emerging in robotics and Physical AI.

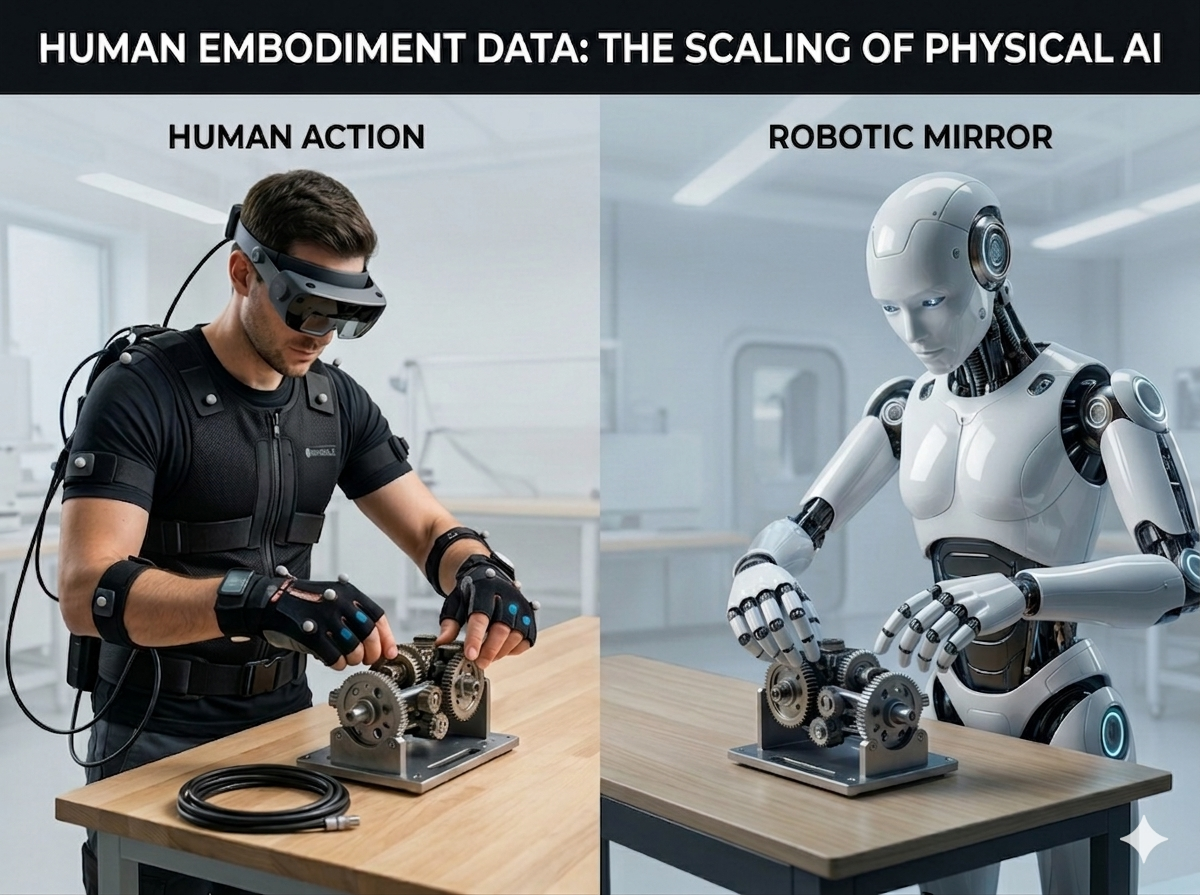

Recent research and industry developments suggest that human embodiment data (large-scale recordings of human motion and interaction with the physical world) may play a central role in training next-generation robotic systems.

If this hypothesis proves correct, robotics could enter a phase where data availability becomes the primary driver of progress.

The Emerging Role of Human Embodiment Data

Robots operating in real-world environments must perform tasks that humans carry out routinely:

- manipulating objects

- assembling components

- interacting with tools

- navigating complex environments

Traditionally, robot training has relied on:

- teleoperation demonstrations

- manually engineered control policies

- limited task-specific datasets

While effective for narrow applications, these approaches do not scale easily across diverse environments and tasks.

Human embodiment data introduces a new paradigm.

Using technologies such as egocentric video capture, motion tracking, and multimodal sensing, it is now possible to record large volumes of human activity data. These datasets capture rich information about:

- motion trajectories

- hand-object interaction

- task sequences

- environmental context

This information can provide valuable priors for training robotic models.

In this sense, human embodiment data may play a role for Physical AI similar to the one internet-scale text played for large language models.
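The four kinds of information listed above could be stored as one record per captured frame. The sketch below is one possible layout, not a standard schema: every class and field name (`EmbodimentFrame`, `HandObjectContact`, `task_step`, and so on) is an assumption made for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

@dataclass
class HandObjectContact:
    hand: str            # "left" or "right"
    object_label: str    # e.g. "mug"
    in_contact: bool

@dataclass
class EmbodimentFrame:
    timestamp_s: float
    wrist_position_m: Tuple[float, float, float]   # one motion-trajectory sample
    contacts: List[HandObjectContact] = field(default_factory=list)  # hand-object interaction
    task_step: str = ""      # task-sequence label
    scene_label: str = ""    # environmental context

def task_sequence(frames):
    """Collapse per-frame step labels into the ordered list of distinct steps."""
    steps = []
    for frame in sorted(frames, key=lambda f: f.timestamp_s):
        if frame.task_step and (not steps or steps[-1] != frame.task_step):
            steps.append(frame.task_step)
    return steps

# Hand-written example frames, for illustration only.
mug = HandObjectContact("right", "mug", True)
frames = [
    EmbodimentFrame(0.0, (0.10, 0.00, 0.90), [], "reach", "kitchen"),
    EmbodimentFrame(0.5, (0.20, 0.00, 0.80), [mug], "grasp", "kitchen"),
    EmbodimentFrame(1.0, (0.20, 0.00, 0.85), [mug], "grasp", "kitchen"),
    EmbodimentFrame(1.5, (0.20, 0.10, 0.95), [mug], "lift", "kitchen"),
]
print(task_sequence(frames))
```

Keeping trajectory, contact, task, and context fields in the same record means a training pipeline can slice the data by any of them without joining separate files.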

From Algorithms to Data Scaling

Historically, robotics progress has been driven primarily by improvements in algorithms and hardware.

However, once a scalable training paradigm emerges, the competitive landscape often changes.

In many areas of AI, the key bottleneck shifts from model architecture to data scale and infrastructure.

Examples include:

- web-scale text datasets for language models

- large driving datasets for autonomous vehicles

- internet image datasets for computer vision

If Physical AI begins to follow a similar trajectory, access to large-scale embodiment data could become a major strategic advantage.

This shift would fundamentally reshape how robotics systems are developed.

The Data Flywheel of Physical AI

Large-scale human data could also enable a powerful learning cycle for robotics.

Human activity data can be used to pretrain models capable of understanding motion and interaction patterns. These models can then be adapted for robotic systems operating in real-world environments.

Once deployed, robots generate their own operational data, including sensor observations, action outcomes, and environmental feedback.

This creates a data flywheel:

Human embodiment data → model training → robot deployment → robot experience data → model improvement

Over time, this loop may significantly accelerate the development of robotic capabilities.
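The loop described in this section can be sketched as a toy program. Every function here is a placeholder that only does bookkeeping: `train` counts examples rather than learning anything, and `deploy_and_collect` fabricates log records instead of running a robot. All names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Model:
    version: int = 0
    examples_seen: int = 0

def train(model, dataset):
    """Placeholder for pretraining or fine-tuning: only records how much data was consumed."""
    return Model(version=model.version + 1,
                 examples_seen=model.examples_seen + len(dataset))

def deploy_and_collect(model, episodes):
    """Placeholder for deployment: each episode yields one logged experience record."""
    return [{"model_version": model.version, "episode": i} for i in range(episodes)]

def run_flywheel(human_data, rounds, episodes_per_round):
    # Human embodiment data -> model training
    model = train(Model(), human_data)
    history = [model]
    for _ in range(rounds):
        # Robot deployment -> robot experience data
        robot_data = deploy_and_collect(model, episodes_per_round)
        # Robot experience data -> model improvement
        model = train(model, robot_data)
        history.append(model)
    return history

history = run_flywheel(human_data=["clip"] * 100, rounds=3, episodes_per_round=10)
for m in history:
    print(m)
```

The structural point is that human data enters once, at the start, while robot experience data re-enters on every round, so the share of robot-generated data grows with each turn of the loop.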

Implications for the Robotics Industry

If embodiment data becomes a core training resource, the robotics ecosystem may evolve toward a more data-centric structure.

In addition to hardware innovation and model development, new infrastructure layers may emerge around:

- large-scale human motion capture

- dataset curation and annotation

- cross-embodiment data alignment

- training data pipelines for robotics models

Organizations capable of building and managing these data infrastructures may play a critical role in the future Physical AI stack.

In other words, the development of Physical AI may depend not only on advances in robotics engineering, but also on the ability to scale high-quality embodiment data.
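As one narrow illustration of what cross-embodiment data alignment can involve, the sketch below maps a human pinch (thumb-to-index distance) onto the opening of a parallel-jaw gripper. The linear rescaling, the function name, and the default ranges of 0.10 m and 0.08 m are all assumptions chosen for the example; real retargeting pipelines are considerably more involved.

```python
def pinch_to_gripper_opening(pinch_distance_m, human_max_m=0.10, gripper_max_m=0.08):
    """Linearly rescale a human pinch distance to a gripper opening.

    The result is clamped to the gripper's range [0, gripper_max_m].
    """
    if human_max_m <= 0 or gripper_max_m < 0:
        raise ValueError("ranges must be positive")
    # Fraction of the human's maximum pinch, clamped to [0, 1].
    fraction = min(max(pinch_distance_m / human_max_m, 0.0), 1.0)
    return fraction * gripper_max_m

# Hand-picked sample distances in metres, including out-of-range values.
for d in (-0.01, 0.05, 0.20):
    print(d, "->", pinch_to_gripper_opening(d))
```

Clamping matters because human recordings routinely contain values the robot cannot reproduce, and the aligned dataset should only contain commands the target hardware can execute.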

Building Data Infrastructure for Physical AI

At maadaa.ai, we focus on building data infrastructure that supports next-generation AI models, including emerging Physical AI systems.

Our work explores methods for collecting, structuring, and curating large-scale datasets that enable models to learn from complex human behaviors and real-world environments.

As robotics systems continue to evolve, the ability to generate and manage high-quality training data will likely become a foundational capability across the industry.

References

(1) Human Embodiment Data Has a Scaling Law — The GPT Moment for Physical AI

(2) Who Will Own the Data of Physical AI? https://medium.com/@myschang/who-will-own-the-data-of-physical-ai-6b3f080c6637